The Equation: How Institutions Can Avoid Making False Claims of AI Use

- February 26, 2026

- CBR - Artificial Intelligence

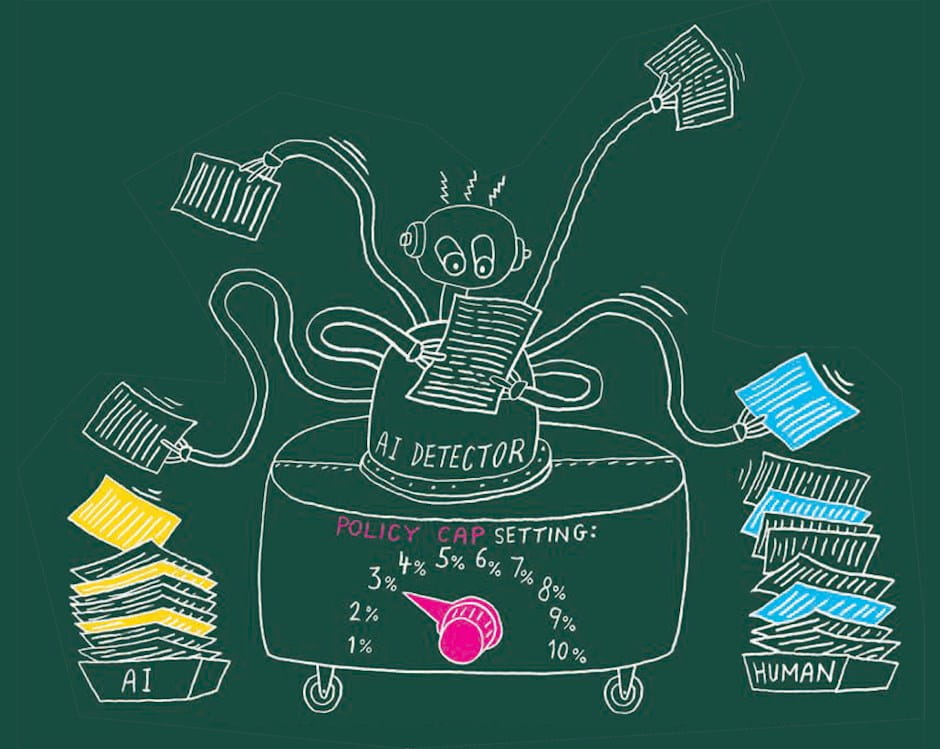

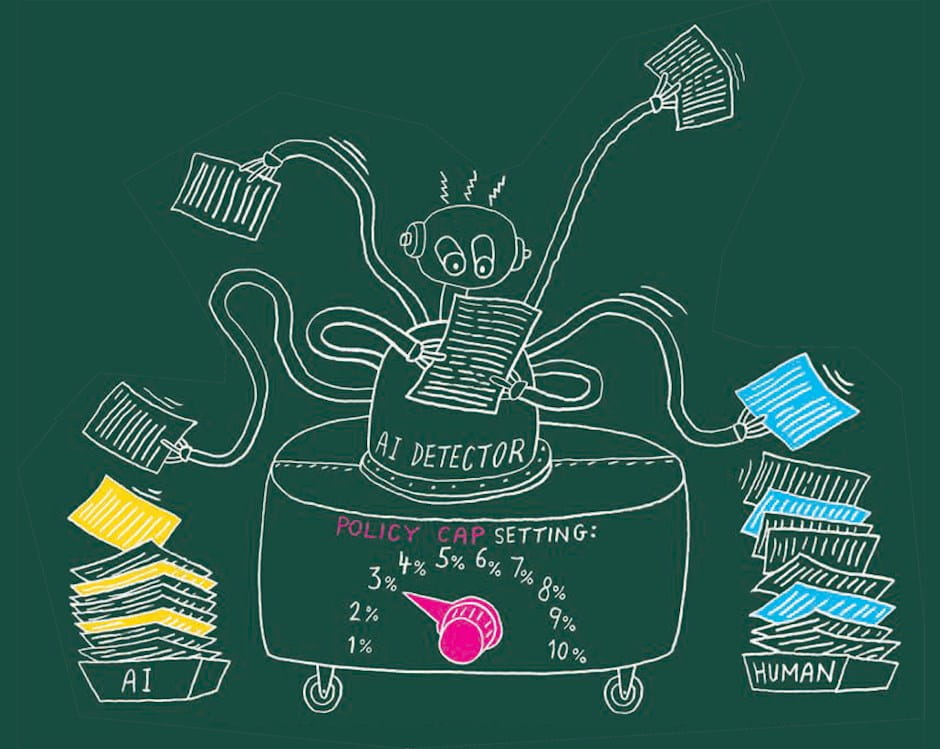

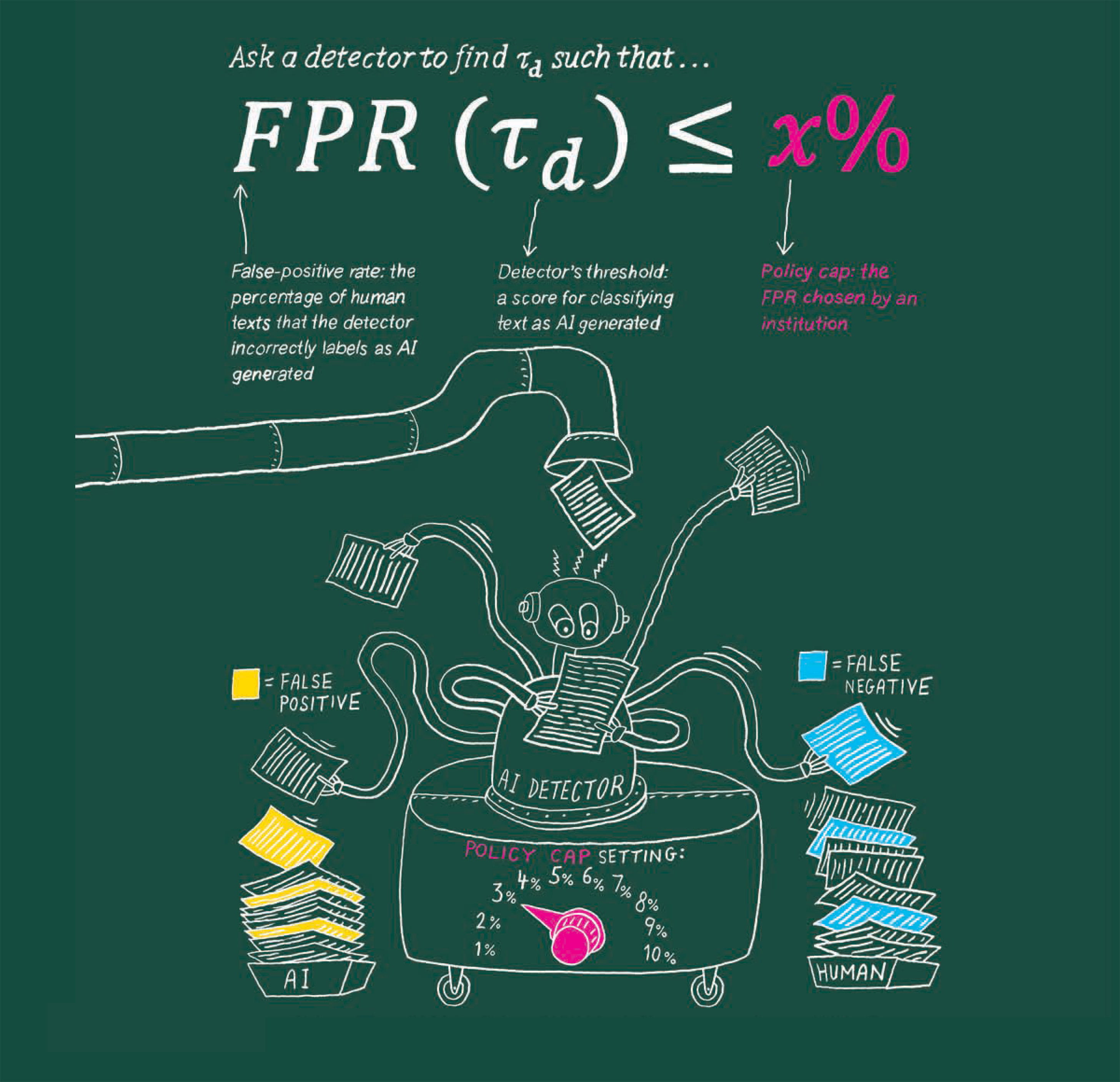

Schools, publishers, employers, and other institutions may want to know whether material was produced by artificial intelligence rather than a human. An AI detector can help, but the technology is imperfect, so institutions have to weigh the potential benefits against the risk of making a false accusation. Chicago Booth principal researcher Brian Jabarian and Booth’s Alex Imas propose a “policy cap” method to help with that trade-off. First, an institution decides on a policy cap: its maximum acceptable rate of false accusations, that is, the false-positive rate. Given that limit, the detector’s threshold, or cutoff score, for flagging AI-generated text is set so that the false-positive rate stays within the cap. Any text with a score above the threshold is labeled as generated by AI. The institution can then examine the detector’s false-negative rate (the percentage of AI text it fails to detect) at that threshold to ensure this error rate is also acceptable. A policy cap allows institutions to use any available detector and to compare performance across detectors at the same false-positive limit, even though each one relies on a different algorithm. To learn more, read “Do AI Detectors Work Well Enough to Trust?”
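The policy-cap steps can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' implementation: the function names `threshold_for_cap` and `false_negative_rate` are hypothetical, the scores are made-up numbers, and the sketch assumes the institution has detector scores for texts whose true origin is known, with higher scores meaning "more likely AI."

```python
def threshold_for_cap(human_scores, cap):
    """Return the lowest observed cutoff whose false-positive rate is <= cap.

    A false positive is a human-written text scoring strictly above the cutoff.
    """
    n = len(human_scores)
    for cutoff in sorted(set(human_scores)):
        fpr = sum(score > cutoff for score in human_scores) / n
        if fpr <= cap:
            return cutoff
    # Not reached for non-empty input: the highest score always gives fpr == 0.
    raise ValueError("human_scores must not be empty")


def false_negative_rate(ai_scores, cutoff):
    """Share of AI-generated texts that are NOT flagged at this cutoff."""
    return sum(score <= cutoff for score in ai_scores) / len(ai_scores)


# Illustrative (invented) detector scores for texts of known origin.
human_scores = [0.05, 0.10, 0.20, 0.30, 0.35, 0.40, 0.55, 0.60, 0.70, 0.90]
ai_scores = [0.40, 0.65, 0.75, 0.80, 0.85, 0.90, 0.92, 0.95, 0.97, 0.99]

policy_cap = 0.10  # assumption: at most 10% of human texts may be flagged
cutoff = threshold_for_cap(human_scores, policy_cap)
fnr = false_negative_rate(ai_scores, cutoff)
print(f"cutoff={cutoff}, false-negative rate={fnr}")
```

Running the same two functions on scores from a second detector, with the same `policy_cap`, gives a like-for-like comparison: whichever detector has the lower false-negative rate at the capped false-positive rate misses less AI text for the same risk of false accusation.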

Illustration by Peter Arkle
